In this second part of the series about incremental load, we will examine the source based incremental load strategy in detail. First we will look into the data flow of this load strategy and then we will do a step by step implementaiton of this load strategy on an existing table in timeXtender. It is assumed that you are already know what a full load is, but if you don’t please see the first post in this series.

Source Based Incremental Load limits the number of records that are loaded into the raw table, hence decreasing the time spent on data transfer. Since only new or modified records are present in the raw table, the data cleansing and data quality process will also decrease compared to a full load strategy. Source based incremental load cannot handle deletes from the data source. Records deleted in the source system will be present in timeXtender until another full load is made.

The foundation for source based incremental load, is one or more guaranteed incremental value field(s) of numeric of datetime data types. With this load strategy, we will persist the maximum values for these incremental values after each execution, and use them as dynamic filters for the next execution. Automatic incremental value fields, also known as identity or auto number are great candidates for incremental loads, just as automatic fields such as created date or modified date are equally good. On the other hand you need to be careful when using “application values” for which values can be entered manually. With manually entered values, we are very often not guaranteed that the values will be incremental.

If source based incremental load is based on a non guaranteed incremental value, it will very likely leed to gaps in the extracted data. The direct consequence of data gaps are loss of confidence in the data warehouse.

The data flow for Source based incremental load strategy is:

- Delete all records from the raw table.

- If a Last Max Value for the incremental rules field(s) exists, those are applied as a where-clause in next step. If not, a full load is made.

- Insert new or modified data from the source table in the raw table.

- Perform data cleansing on all records in the raw table.

- Perform data quality checks on all records in the raw table.

- If a record exists only in the Raw table, then the record is inserted in the valid table.

- If a record exists in both Raw and Valid, then the record in the valid table is updated.

- Insert/Update Last Max Value for the Incremental Rules field(s).

How to enable source based incremental load in timeXtender

In the following we will implement a source based incremental load strategy on a single table. We will use the Invoices table from the Happy Hotels demo and training database. A couple of assumptions about the scenario:

- Invoices are by definition considered as incremental, as we wouldn’t expect an invoice to be modified.

- We expect that the numbers for invoices are assigned sequentially

- We expect that invoices are created sequentially, thus we wouldn’t create an invoice with an earlier invoice date than the latest created invoice

Step 1 – Enable source based incremental load

On the destination: To enable incremental load, right-click the table and select the Advanced Settings option on the context menu. In the Advanced Settings dialog, mark the Source Based checkbox as shown below.

Step 2 – Define a primary key

A primary key is needed to be able to determine if an incoming record is a insert or update (if the record is new or an existing record was modified in the source). In this example it is a composite key, made of the fields HotelID and Number. Please be note that a primary key in this context is solely used to check for uniqueness and will not be implemented as a physical key in the database.

On the destination: To select/deselect a field as a primary key, right-click the field and select the Include in primary key or Exclude from primary key context menu option. This is done on the table instance in the destination area, as it affects the

Step 3 a – Add incremental selection rule

Incremental selection rules represents the field(s) that contains the incremental value(s). The rule of thumb is, that everything that is on a single line represents a single rule.

On the source: To add a rule, right-click the table and select the Add incremental selection rule context-menu option. Then select the field(s) that represents a single rule.

Step 3 b – Add incremental selection rule

On the source: To add a rule, right-click the table and select the Add incremental selection rule context-menu option. Then select the field(s) that represents a single rule.

To define a overlap of one day, specify a value of 1 in the days field under subtraction

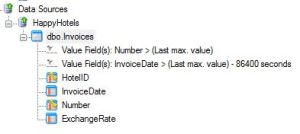

Once both incremental selection rules have been defined, the table in the source area will look similar to this

Note: The ability to define overlaps is a very important part of source based incremental loading. It is used to prevent data gaps caused by long running transactions and dirty reads, by subtracting a fixed value from the current maximum value after last execution. The subtraction forces the starting point for the next execution back to start from an earlier point than indicated by the last maximum value. The size of the subtraction is determined by measuring the actual length of transactions, but this will be a separate topic in another post.

Step 4 – Deploy and execute

On the destination: To deploy and execute, right-click the table and select the deploy and execute context menu option.

A new schema changes step will appear in the deployment process, after incremental load has been enable. This extra step protects the table from unnecessary future deployments and will be run as the first step for any future deployments. When a structure change is done to the table that requires a new full load, it will warn us.

We will also see this information, telling us that a full load is needed to establish a reference load for future incremental loads.

Step 5 – Verify

We should see the immidate results after the first incremental load, as it would have only extracted and processed those records meeting the selection rules for inserts and updates. An easy way to verify the results after last execution is to use the preview feature on the destination table.

The valid table for this example contains 10000 records

The raw table contains only 12 records, which are the results of the minus 1 day overlap

And finally the incremental table displays the persisted maximum values for the incremental rules

In the next post you will learn in detail about the target based incremental load strategy and how to implement this in timeXtender.

[…] based incremental load really only comes in play, when there is no way at all to implement a source based incremental load strategy. In other words, Target based incremental load is used, when there is no way at all to identify new […]

Greetings Thomas,

I tried to follow your example with an existing DW where we are currently doing a full load and the dataset is getting larger and slower to process. When I right-clicked on the traget table there was not an “Advance Settings” for me to click on. Can you tell me how I access this option? I also see the “Add Incremental Selection Rule” option but it is greyed out. Any thoughts/ What am I missing?

Thanks

Jose

Hi Jose

The advanced settings in the context menu, is controlled by an option in the window and menu settings. Please refere to the following Knowledge Base article https://timextender.zendesk.com/entries/20860693-why-am-i-missing-context-menu-options

As for the incremental selection rules, they will be active once you have enabled source based incremental load under advanced settings for a table.

Best Regards

Thomas

@Jose:

Step 1 from above is performed on your Data Warehouse tables or Business Unit tables. Once this is enabled, you can use “Add Inceremental Selection Rule” on the source table that feeds your Data Warehouse/Business Unit table.

Based on Thomas’ steps above, Step 1 and 2 are performed on a Business Unit table. Step 3 is performed on the Data Sources table.